ChatGPT's Energy Use Lower Than Expected

ChatGPT, the chatbot from OpenAI, might not be the energy guzzler we thought it was. But its energy use can vary widely depending on how it's used and which AI model is answering the questions, according to a new study.

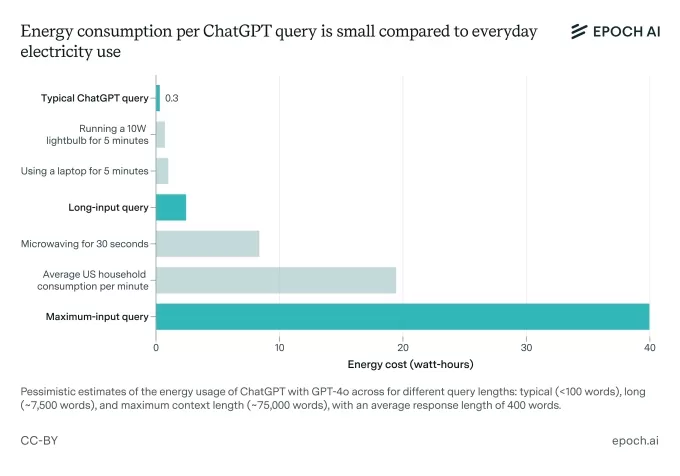

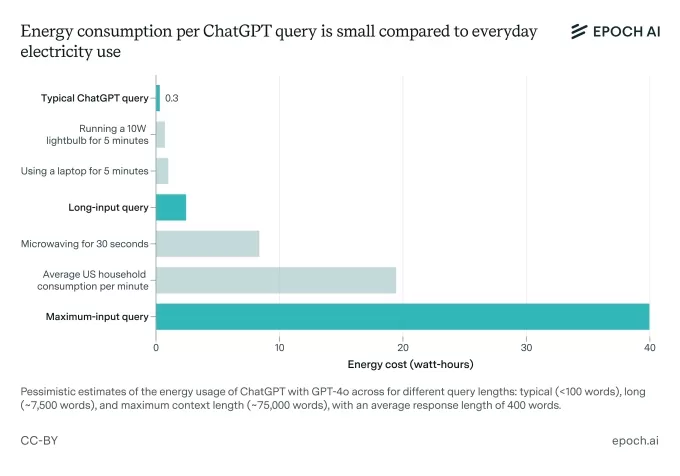

Epoch AI, a nonprofit research group, took a crack at figuring out how much juice a typical ChatGPT query uses. You might have heard that ChatGPT needs about 3 watt-hours to answer a single question, 10 times as much as a Google search. But Epoch thinks that's an overestimate.

Using OpenAI's latest default model, GPT-4o, as a benchmark, Epoch found that the average ChatGPT query actually uses around 0.3 watt-hours. That's less than what many household appliances need.
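To see what the gap between the two per-query figures means in practice, here is a minimal sketch of the arithmetic. The 3 and 0.3 watt-hour values are the estimates quoted above; the 15 queries per day and the 10-watt LED bulb are illustrative assumptions, not numbers from Epoch's analysis.

```python
# Per-query estimates quoted in the article, in watt-hours.
OLD_ESTIMATE_WH = 3.0    # the widely reported older figure
EPOCH_ESTIMATE_WH = 0.3  # Epoch AI's estimate for GPT-4o


def daily_energy_wh(wh_per_query: float, queries_per_day: int) -> float:
    """Total energy in watt-hours for a day's worth of queries."""
    return wh_per_query * queries_per_day


def bulb_minutes(energy_wh: float, bulb_watts: float) -> float:
    """Minutes a bulb of the given wattage could run on that much energy."""
    return energy_wh / bulb_watts * 60


if __name__ == "__main__":
    queries = 15       # hypothetical usage, chosen only for illustration
    bulb_watts = 10.0  # hypothetical LED bulb, chosen only for illustration
    for label, estimate in (("older", OLD_ESTIMATE_WH), ("Epoch", EPOCH_ESTIMATE_WH)):
        total = daily_energy_wh(estimate, queries)
        minutes = bulb_minutes(total, bulb_watts)
        print(f"{label} estimate: {total:.1f} Wh/day, about {minutes:.0f} bulb-minutes")
```

Under those assumptions, a day of queries at Epoch's figure works out to a few watt-hours, one tenth of what the older estimate implies.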

"The energy use is really not a big deal compared to using normal appliances or heating or cooling your home, or driving a car," Joshua You, the data analyst at Epoch who did the analysis, told TechCrunch.

The energy use of AI, and its impact on the environment, is a hot topic as AI companies race to expand their data centers. Just last week, over 100 organizations signed an open letter urging the AI industry and regulators to make sure new AI data centers don't drain natural resources or force utilities to rely on nonrenewable energy sources.

You told TechCrunch that his analysis was sparked by what he saw as outdated research. He pointed out that the report that came up with the 3 watt-hours estimate assumed OpenAI was using older, less-efficient chips to run its models.

Image Credits: Epoch AI

"I've seen a lot of public discourse that correctly recognized that AI was going to consume a lot of energy in the coming years, but didn't really accurately describe the energy that was going to AI today," You said. "Also, some of my colleagues noticed that the most widely reported estimate of 3 watt-hours per query was based on fairly old research, and based on some napkin math seemed to be too high."

Granted, Epoch's 0.3 watt-hour figure is an approximation as well; OpenAI hasn't shared the details needed to make a precise calculation.

The analysis also doesn't take into account the extra energy costs from ChatGPT features like image generation or processing long inputs. You admitted that "long input" ChatGPT queries — like those with long files attached — probably use more electricity upfront than a typical question.

You said he does expect the baseline power consumption of ChatGPT to go up, though.

"\[The\] AI will get more advanced, training this AI will probably require much more energy, and this future AI may be used much more intensely — handling much more tasks, and more complex tasks, than how people use ChatGPT today," You said.

While there have been some cool breakthroughs in AI efficiency lately, the scale at which AI is being used is expected to drive huge, power-hungry infrastructure growth. In the next two years, AI data centers might need nearly all of California's 2022 power capacity (68 GW), according to a Rand report. By 2030, training a frontier model could need power equivalent to eight nuclear reactors (8 GW), the report predicted.

ChatGPT alone reaches a ton of people, and that number's only growing, which means its server demands are massive. OpenAI, along with several investment partners, plans to spend billions on new AI data center projects over the next few years.

OpenAI's focus, along with that of the rest of the AI industry, is shifting to reasoning models. These models can handle more tasks but need more computing power to run. Unlike models like GPT-4o, which answer queries almost instantly, reasoning models "think" for seconds to minutes before answering, which uses more computing power and thus more energy.

"Reasoning models will increasingly take on tasks that older models can't, and generate more \[data\] to do so, and both require more data centers," You said.

OpenAI has started to release more power-efficient reasoning models like o3-mini. But it seems unlikely, at least right now, that these efficiency gains will offset the increased power demands from reasoning models' "thinking" process and the growing use of AI around the world.

You suggested that people worried about their AI energy footprint use apps like ChatGPT less often, or choose models that need less computing power, where that's possible.

"You could try using smaller AI models like \[OpenAI's\] GPT-4o-mini," You said, "and use them sparingly in a way that requires processing or generating a ton of data."


Comments (34)


PaulTaylor: Wow, what a surprise! 😮 I always thought ChatGPT was an energy monster, but it seems it's not that bad. I wonder how it compares with other AI models in terms of efficiency. Will there be a public ranking soon? It would be very useful for environmentally conscious users.

PaulHill: Wow, ChatGPT’s energy use is lower than I thought! Still, it’s wild how much it varies by model and usage. Makes me wonder how sustainable scaling up AI will be in the long run. 🤔

WillPerez: Surprising to see ChatGPT's energy use isn't as bad as hyped! Still, makes me wonder how much power those massive AI models really burn through when we’re all chatting away. 🤔

CharlesRoberts: The energy ChatGPT uses is surprisingly low! I thought it was an energy hog, but it's not that bad. Still, it's crazy how much it can vary depending on the model and usage. Makes you think about the future of AI, right? 🤔 Keep up the good work, OpenAI!


